Task Information

Student: Andrés Cruz Chipol

Course: Machine Learning

Due date: February 19, 2026

Task Description

- Using the same data as in the multiclass logistic regression problem, solve the problem again with support vector machines (SVM).

- Present both decision-surface plots so they can be compared.

The Multiclass Problem with SVM

This work uses the same multiclass dataset as the logistic regression exercise: two features (x₁, x₂) and three classes (0, 1, 2). The goal is to solve the problem with Support Vector Machines (SVM) using LinearSVC, and to compare the decision surfaces of logistic regression and LinearSVC on the same data.

Dataset

The file blobs.csv contains the data in the format (x₁, x₂, class), one sample per row:

    3.562767635041874659e-02,-9.829376245870191653e-02,0.000000000000000000e+00
    -4.152695604241516847e+00,-2.836190214513588437e+00,1.000000000000000000e+00
    -6.442970057256140137e+00,-2.481140136628053661e+00,1.000000000000000000e+00
    -4.768761516462166838e+00,-1.214663093369577673e+00,1.000000000000000000e+00
    -1.004115385022471330e+00,-4.325248830027482505e+00,2.000000000000000000e+00
    -2.730580059957524508e+00,-4.030046753887877031e+00,2.000000000000000000e+00
    -2.852371251950764908e+00,-4.396415651210693554e+00,2.000000000000000000e+00
    -4.206049385907203231e+00,-2.600205003361393707e+00,1.000000000000000000e+00
    -1.930399130187181012e+00,3.347968644261194893e+00,0.000000000000000000e+00
    -3.412618064367627380e-01,2.127673221400525172e+00,0.000000000000000000e+00
    -5.037551761092601943e+00,-3.592446628384896812e+00,1.000000000000000000e+00
    -4.877034980835113664e+00,-8.471903657696826517e-01,1.000000000000000000e+00
    -3.642888118246185858e+00,-4.693976116024383138e+00,2.000000000000000000e+00
    -1.576938246725097414e+00,3.895699535449327122e+00,0.000000000000000000e+00
    -1.341741362859135922e+00,-5.972974975203115378e+00,2.000000000000000000e+00
    -2.546105904007627263e+00,-3.863080150976580640e+00,2.000000000000000000e+00
    -3.299946532650131381e+00,-3.394062645447537108e+00,2.000000000000000000e+00
    -1.516952653093859293e+00,1.358039292922861296e+00,0.000000000000000000e+00
    -5.342709927397626402e+00,-1.933077416847355456e+00,1.000000000000000000e+00
    -6.093768097567592079e+00,-1.807291840622934131e+00,1.000000000000000000e+00
    -2.969679995209843160e+00,-3.835876960074290132e+00,2.000000000000000000e+00
    -5.265074757830173091e+00,-1.944059726988243808e+00,1.000000000000000000e+00
    -3.283641930441098644e+00,-4.373255204682885200e+00,2.000000000000000000e+00
    -5.107408569171612012e-01,1.953874558944170614e+00,0.000000000000000000e+00
    -5.374141201916664556e+00,-2.615404681135824916e+00,1.000000000000000000e+00
    -3.842557865346929447e+00,-6.511451816419160821e+00,2.000000000000000000e+00
    -3.091076648260287385e+00,-4.176769285595522518e+00,2.000000000000000000e+00
    -5.296096330184323353e-01,1.850995087928062111e+00,0.000000000000000000e+00
    6.323279840707143329e-01,1.431042249239267150e-01,0.000000000000000000e+00
    -3.011864754755498641e+00,-5.220955441935164920e+00,2.000000000000000000e+00
    -1.097668032600275678e+00,2.733600401159766768e+00,0.000000000000000000e+00
    -1.972298150996399935e+00,1.853902212008703199e+00,0.000000000000000000e+00
    -4.049535549749097463e+00,-5.073640879962285410e+00,2.000000000000000000e+00
    -1.021615505335874641e+00,1.315615970336744489e+00,0.000000000000000000e+00
    -3.371205498677644297e+00,-1.638662577107126594e+00,1.000000000000000000e+00
    -3.719010863736218031e+00,-4.178359924841167583e+00,2.000000000000000000e+00
    -3.799938371925043690e+00,-1.791517856197658354e+00,1.000000000000000000e+00
    -6.371973572073306613e+00,-1.661514881639310603e+00,1.000000000000000000e+00
    -5.179776553904701153e+00,-2.580594901474859704e+00,1.000000000000000000e+00
    -3.668885835724902567e+00,-4.195668240086832590e+00,2.000000000000000000e+00
    -3.251776014706230455e+00,-4.149726756059299859e+00,2.000000000000000000e+00
    -4.648310273162443274e+00,-3.288957684919034286e+00,1.000000000000000000e+00
    -1.152197156987767368e+00,1.819190579753165116e+00,0.000000000000000000e+00
    -6.228914387493512450e+00,-1.426136777705386827e+00,1.000000000000000000e+00
    -4.800556531699781360e+00,-1.857665627874143466e+00,1.000000000000000000e+00
    -3.002975767476160129e+00,-3.938912842314636453e+00,2.000000000000000000e+00
    7.181076761853577572e-02,2.705739273323449101e+00,0.000000000000000000e+00
    1.355795453558901631e+00,8.067485989334430840e-01,0.000000000000000000e+00
    -1.947090301609537555e+00,2.437660632238673131e+00,0.000000000000000000e+00
    -9.526701784929079153e-01,1.267475500162511981e+00,0.000000000000000000e+00
    -5.221184394436909848e+00,-2.177432342611599569e+00,1.000000000000000000e+00
    -2.873391295728659145e+00,-5.704052392928280035e+00,2.000000000000000000e+00
    -3.454619963916163439e+00,-3.458233790313498091e+00,2.000000000000000000e+00
    -3.933319283617795925e+00,-3.252608433861614579e+00,2.000000000000000000e+00
    -7.096210004926306603e-01,2.820448044128999854e+00,0.000000000000000000e+00
    -3.037229767850909035e+00,-4.251317212054523509e+00,2.000000000000000000e+00
    -5.618857095774679955e+00,-1.278642239609383502e+00,1.000000000000000000e+00
    -3.725100321667774050e+00,-9.572524426867623504e-03,1.000000000000000000e+00
    -7.875662062586669121e-01,2.786060148137402770e+00,0.000000000000000000e+00
    -3.168501325562509408e-01,1.905152099318865089e+00,0.000000000000000000e+00
    -4.812294860838266075e+00,-1.566622626473346047e+00,1.000000000000000000e+00
    -4.179357780083777563e+00,-3.175127160663311354e+00,2.000000000000000000e+00
    -3.029256278393543056e+00,-2.783388169779760446e+00,2.000000000000000000e+00
    -5.023473207702329191e+00,-2.751835892850762022e+00,1.000000000000000000e+00
    -6.856838116273302752e+00,-7.405102432287820058e-01,1.000000000000000000e+00
    -2.200983979241277311e+00,-4.363921915788041339e+00,2.000000000000000000e+00
    -1.584177893970912576e+00,3.456113089654868631e+00,0.000000000000000000e+00
    -4.804999847074864050e+00,-3.763066331848590185e+00,2.000000000000000000e+00
    -1.002208160524695524e+00,1.325386516500209000e+00,0.000000000000000000e+00
    -4.575361897762437735e+00,-1.899334205333042869e+00,1.000000000000000000e+00
    -4.595364610035750808e+00,-1.383095750444535366e+00,1.000000000000000000e+00
    -4.258299800730275919e+00,-2.930374875489536901e+00,1.000000000000000000e+00
    -2.930121811533240361e+00,-3.656331848664962969e+00,2.000000000000000000e+00
    9.203460900245152843e-03,3.134347015725138075e+00,0.000000000000000000e+00
    8.300222241356107755e-01,2.945289094998916113e+00,0.000000000000000000e+00
    -4.683221304584945344e+00,-3.998875489505605341e+00,1.000000000000000000e+00
    -2.663554934823001652e+00,-3.326202412447014201e+00,2.000000000000000000e+00
    -3.488772098650480213e+00,-4.302928294825627553e+00,2.000000000000000000e+00
    -1.357951705237715467e+00,1.130276312265410255e+00,0.000000000000000000e+00
    -5.445984816612549295e+00,-7.521665688761034474e-01,1.000000000000000000e+00
    3.018494344771671667e-01,3.723061750843779549e+00,0.000000000000000000e+00
    -6.198124284161736774e+00,-1.113328956137580761e+00,1.000000000000000000e+00
    -4.838819182378720107e+00,-1.100505352565377404e+00,1.000000000000000000e+00
    -1.627782383487888174e+00,-2.965557353751517855e+00,2.000000000000000000e+00
    -1.521440704699568824e+00,1.806491407565603335e+00,0.000000000000000000e+00
    3.039894893611776450e-01,1.103353667107549896e+00,0.000000000000000000e+00
    -6.388644683067937757e-01,4.303500070900422969e+00,0.000000000000000000e+00
    7.107599629015204368e-02,1.519517075247247551e+00,0.000000000000000000e+00
    -5.305060264454922958e+00,-1.148699631074356020e+00,1.000000000000000000e+00
    -1.501026083811078937e+00,2.190580335502679610e+00,0.000000000000000000e+00
    -2.372102521835099509e+00,-3.707121335936298667e+00,2.000000000000000000e+00
    -7.789721981982308252e-01,1.566249287852227390e+00,0.000000000000000000e+00
    -1.038674186349037853e+00,2.789868125603778282e+00,0.000000000000000000e+00
    -5.669518538115581485e+00,-1.599110487360682953e+00,1.000000000000000000e+00
    -3.877438543590886688e+00,-1.567773735744774521e+00,1.000000000000000000e+00
    -2.493616489969455507e+00,-1.889634405337764722e+00,2.000000000000000000e+00
    -3.515031683528864637e+00,-5.198632781058910801e+00,2.000000000000000000e+00
    -5.441926277200009876e-01,3.088386098692308845e+00,0.000000000000000000e+00
    -2.721489419025313605e+00,-3.032171957604763435e+00,2.000000000000000000e+00
    9.150318112422202166e-01,1.442038033526477969e+00,0.000000000000000000e+00

- Features: 2 variables (x₁, x₂)

- Classes: 3 categories (0, 1, 2)
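As a quick check of the file layout described above, the following sketch parses a few rows and separates features from labels. It embeds only the first five rows of blobs.csv as a string so that it runs without the file; the variable names are illustrative and not part of the original script.

```python
import io
import numpy as np

# First five rows of blobs.csv, embedded so the sketch runs without the file.
sample = io.StringIO(
    "3.562767635041874659e-02,-9.829376245870191653e-02,0.000000000000000000e+00\n"
    "-4.152695604241516847e+00,-2.836190214513588437e+00,1.000000000000000000e+00\n"
    "-6.442970057256140137e+00,-2.481140136628053661e+00,1.000000000000000000e+00\n"
    "-4.768761516462166838e+00,-1.214663093369577673e+00,1.000000000000000000e+00\n"
    "-1.004115385022471330e+00,-4.325248830027482505e+00,2.000000000000000000e+00\n"
)

D = np.loadtxt(sample, delimiter=",")
X = D[:, 0:2]            # features x1, x2
y = D[:, 2].astype(int)  # class labels are stored as floats in the file

# Count how many samples each class has in this small excerpt.
labels, counts = np.unique(y, return_counts=True)
print(X.shape, dict(zip(labels.tolist(), counts.tolist())))
```

Replacing `sample` with the path "blobs.csv" should give the same kind of summary for the full dataset.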

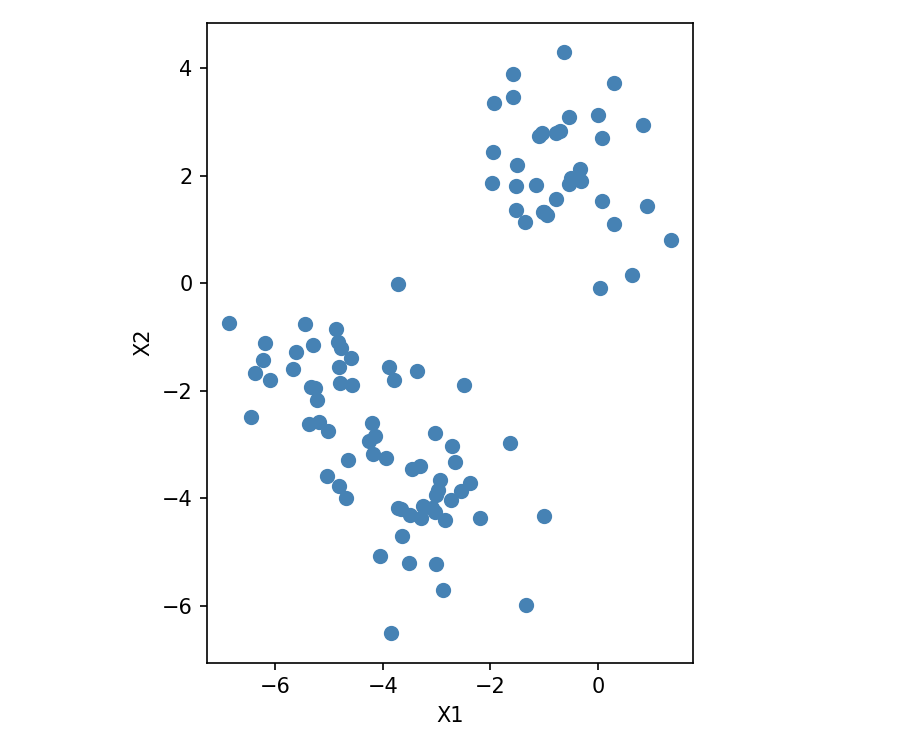

Data Visualization

The following code was used to visualize how the points are distributed in the (x₁, x₂) plane:

    import numpy as np
    import matplotlib.pyplot as plt

    # Load the data and keep only the two feature columns
    D = np.loadtxt("blobs.csv", delimiter=',')
    X = D[:, 0:2]

    # Scatter plot of all points in a single color (class labels are not used here)
    fig, ax = plt.subplots(figsize=(6, 5))
    ax.scatter(X[:, 0], X[:, 1], c='steelblue', s=40)
    ax.set_xlabel("X1")
    ax.set_ylabel("X2")
    ax.set_aspect('equal')

    plt.tight_layout()
    plt.savefig("grafica_datos.png", dpi=150)
    plt.show()

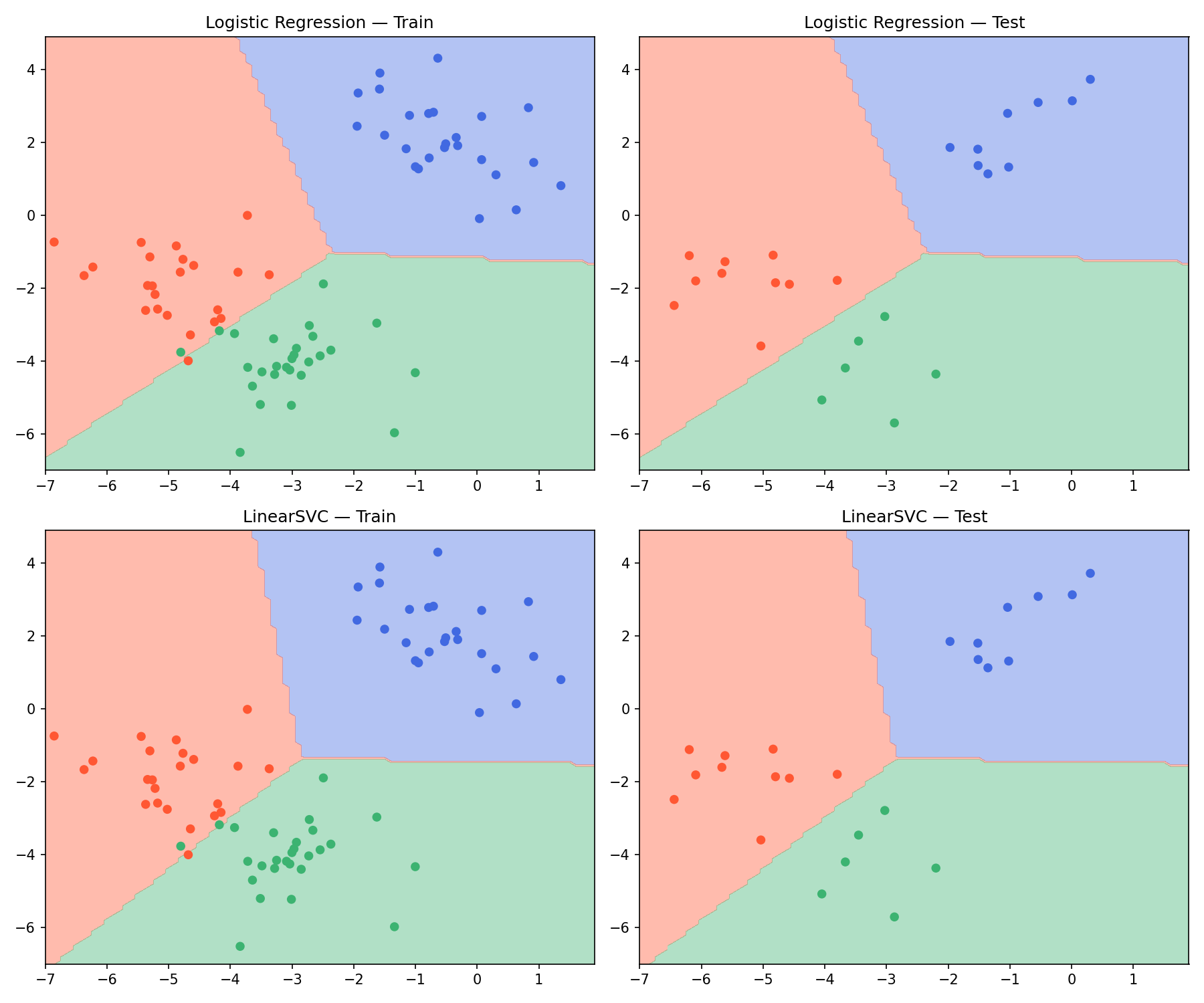

Comparison: Logistic Regression vs LinearSVC (SVM)

Both classifiers share a common workflow: train/test split, training, and evaluation with accuracy and macro-averaged precision. For LinearSVC, the features are scaled with MinMaxScaler to the range (-1, 1) and the model is configured with multi_class='ovr' (One-vs-Rest). The decision surfaces are obtained by predicting over a grid that covers the feature space. The figure is a 2×2 grid: one row per model (Logistic Regression and LinearSVC), with the training data on the left and the test data on the right.
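The scaling step can be sketched in plain NumPy. This is an illustrative re-implementation of the min-max formula, not scikit-learn's own code: `fit_minmax`, the toy arrays, and the handling of constant columns are assumptions made for the example. The column minima and maxima are learned from the training data only and then reused for the test data.

```python
import numpy as np

def fit_minmax(X, lo=-1.0, hi=1.0):
    """Learn per-column min/max from X and return a function mapping them onto [lo, hi]."""
    x_min = X.min(axis=0)
    x_max = X.max(axis=0)
    # Assumption for this sketch: constant columns get a span of 1 to avoid dividing by zero.
    span = np.where(x_max > x_min, x_max - x_min, 1.0)
    return lambda Z: lo + (Z - x_min) / span * (hi - lo)

# Toy values (hypothetical, not taken from blobs.csv).
X_train = np.array([[-6.0, -4.0], [-2.0, 0.0], [0.0, 4.0]])
X_test = np.array([[-3.0, 2.0], [1.0, 5.0]])

scale = fit_minmax(X_train)   # statistics come from the training set only
X_train_s = scale(X_train)
X_test_s = scale(X_test)

print(X_train_s)
print(X_test_s)
```

The training columns land exactly on [-1, 1], while a test point beyond the training extremes (the second test row here) maps slightly outside that interval. This is expected when the scaler is fitted on the training split alone.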

Comparison Code

    import numpy as np
    import matplotlib.pyplot as plt
    from matplotlib.colors import ListedColormap
    from sklearn.model_selection import train_test_split
    from sklearn.linear_model import LogisticRegression
    from sklearn.preprocessing import MinMaxScaler
    from sklearn.svm import LinearSVC
    from sklearn.metrics import accuracy_score, precision_score

    class Classifier:
        """Wraps an estimator with an optional scaler, its metrics, and its plot."""

        def __init__(self, name, estimator, scaler=None):
            self.name = name
            self.estimator = estimator
            self.scaler = scaler

        def fit(self, X_train, y_train, X_test, y_test):
            self.X_train, self.y_train = X_train, y_train
            self.X_test, self.y_test = X_test, y_test
            # The scaler (if any) is fitted on the training data only
            X_fit = self.scaler.fit_transform(X_train) if self.scaler is not None else X_train
            self.estimator.fit(X_fit, y_train)
            self.train_metrics = self._metrics(X_train, y_train)
            self.test_metrics = self._metrics(X_test, y_test)
            acc_tr, prec_tr = self.train_metrics
            acc_te, prec_te = self.test_metrics
            print(f"{self.name}: Train Acc={acc_tr:.2f} Prec={prec_tr:.2f} | Test Acc={acc_te:.2f} Prec={prec_te:.2f}")

        def predict(self, X):
            # Apply the same scaling used during training before predicting
            X_in = self.scaler.transform(X) if self.scaler is not None else X
            return self.estimator.predict(X_in)

        def _metrics(self, X, y):
            pred = self.predict(X)
            return (
                accuracy_score(y, pred),
                precision_score(y, pred, average='macro', zero_division=0),
            )

        def plot(self, axis, grid_x, grid_y, data='both'):
            cmap = ListedColormap(['royalblue', '#FF5733', 'mediumseagreen'])
            # Predict the class of every grid point to draw the decision surface
            grid_points = np.c_[grid_x.ravel(), grid_y.ravel()]
            z = self.predict(grid_points).reshape(grid_x.shape)
            axis.contourf(grid_x, grid_y, z, levels=[-.5, .5, 1.5, 2.5], cmap=cmap, alpha=0.4)
            if data in ('both', 'train'):
                axis.scatter(self.X_train[:, 0], self.X_train[:, 1], c=self.y_train, cmap=cmap, s=30)
            if data in ('both', 'test'):
                axis.scatter(self.X_test[:, 0], self.X_test[:, 1], c=self.y_test, cmap=cmap, s=30)
            axis.set_title(f"{self.name} - {'Train' if data == 'train' else 'Test' if data == 'test' else 'Train + Test'}")

    data = np.loadtxt("blobs.csv", delimiter=',')
    X, y = data[:, 0:2], data[:, 2]
    X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=1)

    # Logistic Regression: with 3+ classes and the default solver, sklearn fits a
    # multinomial (softmax) model
    lr = LogisticRegression()
    models = [
        Classifier("Logistic Regression", lr),
        Classifier("LinearSVC", LinearSVC(C=1, multi_class='ovr', random_state=1), MinMaxScaler(feature_range=(-1, 1))),
    ]
    for m in models:
        m.fit(X_train, y_train, X_test, y_test)

    # Plot: row 0 = LR (train | test), row 1 = LinearSVC (train | test)
    grid_x, grid_y = np.meshgrid(np.arange(-7, 2, 0.1), np.arange(-7, 5, 0.1))
    fig, axes = plt.subplots(2, 2, figsize=(12, 10))
    for i, m in enumerate(models):
        m.plot(axes[i, 0], grid_x, grid_y, data='train')
        m.plot(axes[i, 1], grid_x, grid_y, data='test')

    plt.tight_layout()
    plt.savefig('comparacion_superficies.png', dpi=150)
    print("\nFigure saved: comparacion_superficies.png")
    plt.show()

Metric Results

Running the script produces:

    Logistic Regression: Train Acc=0.96 Prec=0.96 | Test Acc=1.00 Prec=1.00
    LinearSVC: Train Acc=0.97 Prec=0.97 | Test Acc=1.00 Prec=1.00

Both models reach 100% accuracy and precision on the test set; on the training set LinearSVC fits slightly better (0.97 versus 0.96).

Decision Surface Plots

Interpretation:

- Row 0 (Logistic Regression): the left column shows only the training data and the right column only the test data. In sklearn, with three or more classes and the default solver, multinomial (softmax) regression is used: a single model considers all classes at once.

- Row 1 (LinearSVC): same layout (training | test). LinearSVC is used with multi_class='ovr' (One-vs-Rest) and MinMaxScaler in the range (-1, 1).

- The colors (blue, orange, green) correspond to the three classes. This makes it possible to compare the surfaces of the two models separately on the training and test data.
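The sketch below illustrates why both formulations produce straight-line boundaries. It uses one set of hypothetical weights `W` and intercepts `b`; these are not the coefficients learned in this report, and the two models in practice learn different values. The One-vs-Rest rule picks the class with the largest linear score, and the softmax rule picks the most probable class. Because softmax preserves the ordering of the scores, both rules select the same class for a given set of scores, and the boundary between two classes is the line where their scores are equal.

```python
import numpy as np

# Hypothetical weights and intercepts for three linear scorers (one row per class).
W = np.array([[ 1.0,  1.5],
              [-1.2,  0.4],
              [ 0.3, -1.1]])
b = np.array([0.5, -2.0, -1.5])

def scores(X):
    """Linear score w_k . x + b_k for every sample (rows) and class (columns)."""
    return X @ W.T + b

def predict_argmax(X):
    """OvR-style rule: pick the class whose scorer gives the largest value."""
    return np.argmax(scores(X), axis=1)

def predict_softmax(X):
    """Multinomial rule: softmax over the scores, then pick the most probable class."""
    s = scores(X)
    e = np.exp(s - s.max(axis=1, keepdims=True))  # shift for numerical stability
    proba = e / e.sum(axis=1, keepdims=True)
    return np.argmax(proba, axis=1), proba

# Three illustrative points in the (x1, x2) plane.
X = np.array([[0.0, 2.0], [-5.0, -2.0], [-3.0, -4.0]])
labels_ovr = predict_argmax(X)
labels_soft, proba = predict_softmax(X)
print(labels_ovr, labels_soft)
```

The difference between the two models therefore lies in how the weights are fitted (hinge-type loss per class versus a joint log-loss), not in the shape of the resulting regions.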

Conclusions

The multiclass problem was solved with logistic regression (multinomial by default in sklearn) and with SVM through LinearSVC configured with multi_class='ovr'. Both produce linear boundaries between the three classes, and on this dataset both reach 100% accuracy and precision on the test set. Using MinMaxScaler with LinearSVC improves stability when the features have different scales. The 2×2 layout (training | test for each model) allows a direct comparison of each classifier's decision surfaces on the training and test splits of the same data.