Assignment Information

Student: Andrés Cruz Chipol

Course: Machine Learning

Due date: Friday, January 30, 2026

Assignment Description

- Build the classifier for the data.

- Plot the training and test data with the decision function drawn over them.

Regresión Logística

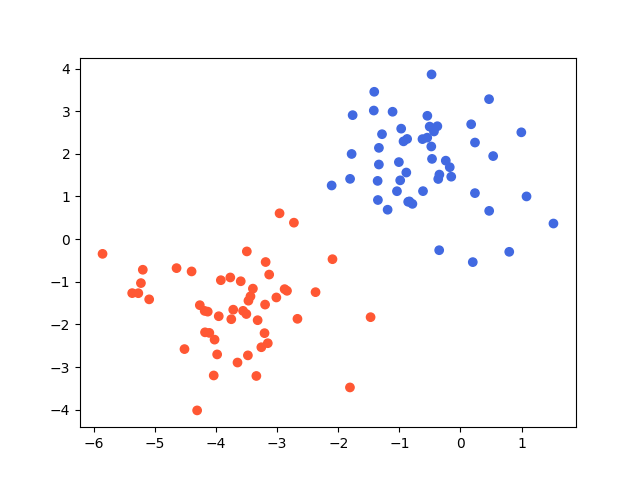

Se trabajó con un conjunto de datos de clasificación binaria generado sintéticamente con dos características (x₁, x₂) y dos clases bien diferenciadas. El objetivo es entrenar un clasificador de regresión logística que sea capaz de separar ambas clases mediante una frontera de decisión lineal.
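For reference, the usual form of the model described above can be written as follows. The symbols w₁, w₂, and b match the ones used later in this report; the 0.5 threshold is the common default and is assumed here rather than stated in the assignment.

```latex
P(y = 1 \mid x_1, x_2) = \sigma(w_1 x_1 + w_2 x_2 + b),
\qquad
\sigma(z) = \frac{1}{1 + e^{-z}}
```

The predicted class is 1 when this probability exceeds 0.5, that is, when w₁·x₁ + w₂·x₂ + b > 0, which is why the resulting boundary is a straight line.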

Raw data, one point per row (x₁, x₂, class):

    2.015836669452705232e-01,-5.389427493430178906e-01,0.000000000000000000e+00
    -3.152924353876207508e+00,-2.440855359777268418e+00,1.000000000000000000e+00
    -2.107937767808997442e+00,1.258130084590813436e+00,0.000000000000000000e+00
    -4.337292270150245876e-01,2.524607127849289423e+00,0.000000000000000000e+00
    -1.470759294654842897e+00,-1.829974196660741903e+00,1.000000000000000000e+00
    -3.197223969589896075e+00,-1.534772120521136207e+00,1.000000000000000000e+00
    -3.319015161583136475e+00,-1.901141017843952952e+00,1.000000000000000000e+00
    -3.206278135541893448e+00,-2.204870148625073689e+00,1.000000000000000000e+00
    -1.764443139592329235e+00,2.907319657376878919e+00,0.000000000000000000e+00
    -1.753058158419109613e-01,1.687024234516208976e+00,0.000000000000000000e+00
    -4.037780510727292160e+00,-3.197111773648576794e+00,1.000000000000000000e+00
    -5.420026913879710806e-01,2.892079855449184222e+00,0.000000000000000000e+00
    -4.109532027878556981e+00,-2.198701482657642536e+00,1.000000000000000000e+00
    -1.410982256130245638e+00,3.455050548565011148e+00,0.000000000000000000e+00
    -1.808385272491507489e+00,-3.477700341836374331e+00,1.000000000000000000e+00
    -3.012749813639998830e+00,-1.367805517609840038e+00,1.000000000000000000e+00
    -3.766590442282502949e+00,-8.987880120807967277e-01,1.000000000000000000e+00
    -1.350996662499007517e+00,9.173903060385452113e-01,0.000000000000000000e+00
    -1.007677637950483707e+00,1.806192804371511640e+00,0.000000000000000000e+00
    -5.093996847202282297e+00,-1.411956985886613669e+00,1.000000000000000000e+00
    -3.436323904842214727e+00,-1.340602326707549530e+00,1.000000000000000000e+00
    -4.265303507464863308e+00,-1.548724872251923346e+00,1.000000000000000000e+00
    -3.750285840073470212e+00,-1.877980571316144598e+00,1.000000000000000000e+00
    -3.447848663223094245e-01,1.513225572059854640e+00,0.000000000000000000e+00
    -1.039108912469522306e+00,1.123865540083042402e+00,0.000000000000000000e+00
    -4.309201774979301014e+00,-4.016177183052420219e+00,1.000000000000000000e+00
    -3.557720557892658952e+00,-1.681494652228781694e+00,1.000000000000000000e+00
    -3.636536424235805032e-01,1.410346101043746136e+00,0.000000000000000000e+00
    7.982839746655661095e-01,-2.975447619603892591e-01,0.000000000000000000e+00
    -3.478508664387870208e+00,-2.725680808568424318e+00,1.000000000000000000e+00
    -9.317120420054239016e-01,2.292951414275450794e+00,0.000000000000000000e+00
    -1.806342160401548158e+00,1.413253225124387225e+00,0.000000000000000000e+00
    -4.516179459381469030e+00,-2.578366246595544808e+00,1.000000000000000000e+00
    -8.556595147410228641e-01,8.749669834524285150e-01,0.000000000000000000e+00
    -2.371434248312334514e+00,-1.243327722370806132e+00,1.000000000000000000e+00
    -4.185654773368590043e+00,-1.683085291474426981e+00,1.000000000000000000e+00
    5.350939175220990052e-01,1.947752365021208742e+00,0.000000000000000000e+00
    -5.372202321707996830e+00,-1.266180026902990141e+00,1.000000000000000000e+00
    -4.180005303539391370e+00,-2.185260046738539241e+00,1.000000000000000000e+00
    -4.135529745357273690e+00,-1.700393606720091766e+00,1.000000000000000000e+00
    -3.718419924338602023e+00,-1.654452122692559701e+00,1.000000000000000000e+00
    -3.648539022797133491e+00,-2.893622830182713379e+00,1.000000000000000000e+00
    -9.862411663929154804e-01,1.378541592868849142e+00,0.000000000000000000e+00
    -5.229143137128202667e+00,-1.030801922969066364e+00,1.000000000000000000e+00
    -4.655242422526382207e-01,1.881604593344723630e+00,0.000000000000000000e+00
    -3.469619677108531697e+00,-1.443638208947895851e+00,1.000000000000000000e+00
    2.377667582133875523e-01,2.265090286439133127e+00,0.000000000000000000e+00
    1.521751444153753408e+00,3.660996120491271100e-01,0.000000000000000000e+00
    -1.781134311014685778e+00,1.997011645354356935e+00,0.000000000000000000e+00
    -7.867141878980561387e-01,8.268265132781960070e-01,0.000000000000000000e+00
    -8.861521049897672642e-01,1.561837878607267305e+00,0.000000000000000000e+00
    -3.340035205361030712e+00,-3.208777759561539433e+00,1.000000000000000000e+00
    -3.921263873548535006e+00,-9.629591569467573775e-01,1.000000000000000000e+00
    -4.399963193250167492e+00,-7.573338004948741986e-01,1.000000000000000000e+00
    -5.436650098977788836e-01,2.379799057244683880e+00,0.000000000000000000e+00
    -3.503873677483280602e+00,-1.756042578687782907e+00,1.000000000000000000e+00
    -1.283824806327537260e+00,2.460627981609483594e+00,0.000000000000000000e+00
    -2.725329071302464268e+00,3.857623303094528389e-01,1.000000000000000000e+00
    -6.216102156638151355e-01,2.345411161253086796e+00,0.000000000000000000e+00
    -1.508941419613991641e-01,1.464503112434549115e+00,0.000000000000000000e+00
    -4.772625713911237133e-01,2.172647594745521271e+00,0.000000000000000000e+00
    -4.646001689716149130e+00,-6.798525272965708632e-01,1.000000000000000000e+00
    -3.495900188025914623e+00,-2.881135364130200660e-01,1.000000000000000000e+00
    -4.023701957337019408e+00,-2.356501038114441560e+00,1.000000000000000000e+00
    -5.857066865907992970e+00,-3.451753884924615434e-01,1.000000000000000000e+00
    -2.667627888873648878e+00,-1.868647282421300737e+00,1.000000000000000000e+00
    -1.418221903376060800e+00,3.015464102770552657e+00,0.000000000000000000e+00
    -5.271643756707234729e+00,-1.267791698481849583e+00,1.000000000000000000e+00
    -8.362521699298437472e-01,8.847375296158930258e-01,0.000000000000000000e+00
    -2.403296083152950957e-01,1.839936015885824228e+00,0.000000000000000000e+00
    -3.595593359670441025e+00,-9.877608957082149033e-01,1.000000000000000000e+00
    -3.258528550364966136e+00,-2.535040020753216439e+00,1.000000000000000000e+00
    -3.396765721165611929e+00,-1.161057215298222367e+00,1.000000000000000000e+00
    1.751594514950969295e-01,2.693698028840822101e+00,0.000000000000000000e+00
    9.959782147304625521e-01,2.504640108114600139e+00,0.000000000000000000e+00
    -3.481890151378027598e-01,-2.596052682867382444e-01,0.000000000000000000e+00
    -3.130198844455373219e+00,-8.309277790802737096e-01,1.000000000000000000e+00
    -3.955416008282851781e+00,-1.807653661458886951e+00,1.000000000000000000e+00
    -1.191995714642863691e+00,6.896273253810942805e-01,0.000000000000000000e+00
    -1.110952527165406156e+00,2.987103652342763649e+00,0.000000000000000000e+00
    4.678054250720189433e-01,3.282412763959463575e+00,0.000000000000000000e+00
    -5.198353033796426992e+00,-7.179941014012602984e-01,1.000000000000000000e+00
    -5.037868929315775235e-01,2.638764868653489692e+00,0.000000000000000000e+00
    -2.094426293120259963e+00,-4.702827203847772530e-01,1.000000000000000000e+00
    -1.355484714104717048e+00,1.365842420681287361e+00,0.000000000000000000e+00
    4.699454799560294216e-01,6.627046802232339218e-01,0.000000000000000000e+00
    -4.729084777119419991e-01,3.862851084016106995e+00,0.000000000000000000e+00
    2.370319868850038203e-01,1.078868088362931577e+00,0.000000000000000000e+00
    -9.700279750077798191e-01,2.590570590144511076e+00,0.000000000000000000e+00
    -1.335070093216227161e+00,1.749931348618363414e+00,0.000000000000000000e+00
    -2.838746431467471076e+00,-1.211846702569558065e+00,1.000000000000000000e+00
    -6.130162076033790486e-01,1.125600300967911416e+00,0.000000000000000000e+00
    -8.727181957541860768e-01,2.349219138719462308e+00,0.000000000000000000e+00
    -1.334486248668438568e+00,2.140159733858184143e+00,0.000000000000000000e+00
    -2.877667293225576906e+00,-1.172438881008454059e+00,1.000000000000000000e+00
    -2.960260399601827075e+00,6.056402280289758799e-01,1.000000000000000000e+00
    -3.981675593161236204e+00,-2.703358147692170199e+00,1.000000000000000000e+00
    -3.782366371251492110e-01,2.647737111807992871e+00,0.000000000000000000e+00
    -3.188133328657685173e+00,-5.368973242380230548e-01,1.000000000000000000e+00
    1.080987801837071993e+00,1.001389046642161995e+00,0.000000000000000000e+00

Dataset

The dataset consists of 100 data points distributed in two groups, with the following characteristics:

- Features: 2 independent variables (x₁, x₂)

- Classes: 2 categories (0 and 1)

Visualization of the Complete Data

The data show two clearly separable groups, which suggests that a linear classifier can achieve a good separation between the two classes.

The visualization was generated with this code:

    import matplotlib.pyplot as plt
    import numpy as np
    from matplotlib.colors import ListedColormap

    # Load the data: columns 0-1 are the features, column 2 is the class
    file = np.loadtxt("blobs.csv", delimiter=',')
    X = file[:,0:2]
    y = file[:,2]

    fig, ax = plt.subplots()
    # One color per class: class 0 in royal blue, class 1 in red-orange
    cmap = ListedColormap(['royalblue','#FF5733'])
    ax.scatter(file[:,0], file[:,1], c=y, cmap=cmap)
    plt.show()

2. Training the Classifier

Complete implementation of the logistic regression classifier:

    import numpy as np
    from sklearn.model_selection import train_test_split
    from sklearn.linear_model import LogisticRegression

    # Read data
    data = np.loadtxt("blobs.csv", delimiter=',')
    X = data[:,0:2]
    y = data[:,2]

    # Split into training and test sets (default split: 25% test; fixed seed)
    X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=1)

    # Fit the classifier on the training set only
    logistic = LogisticRegression()
    logistic.fit(X_train, y_train)

    # Accuracy ("Exactitud") on the held-out test set
    exact = logistic.score(X_test, y_test)
    print("Exactitud:", exact)

    # Model: coefficients (w1, w2) and intercept (b)
    print(logistic.coef_)
    print(logistic.intercept_)

Results:

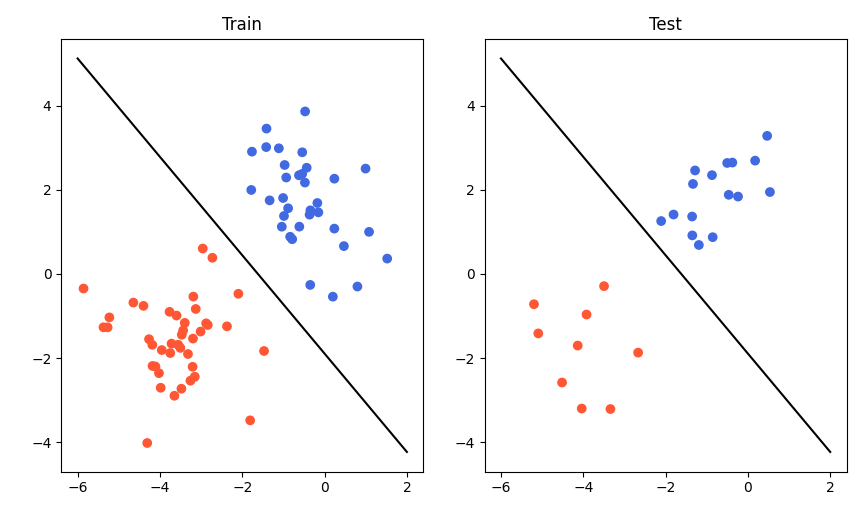

    Exactitud: 1.0
    [[-1.72827643 -1.35557446]]
    [-2.79680024]

3. Visualizing the Decision Boundary

This code plots the training and test data with the decision boundary:

    import numpy as np
    import matplotlib.pyplot as plt
    from sklearn.model_selection import train_test_split
    from matplotlib.colors import ListedColormap

    data = np.loadtxt("blobs.csv", delimiter=',')
    X = data[:,0:2]
    y = data[:,2]

    X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=1)

    # Decision boundary w1*x1 + w2*x2 + b = 0 solved for x2, using the fitted
    # values w1 = -1.72827643, w2 = -1.35557446, b = -2.79680024
    vx = np.linspace(-6, 2, 10)
    vy = (1.72827643 * vx - (-2.79680024)) / (-1.35557446)

    fig, [ex1, ex2] = plt.subplots(ncols=2, nrows=1)

    cmap = ListedColormap(['royalblue','#FF5733'])

    # Left panel: training data with the boundary
    ex1.scatter(X_train[:,0], X_train[:,1], c=y_train, cmap=cmap)
    ex1.plot(vx, vy, color='black')
    ex1.set_title("Train")

    # Right panel: test data with the same boundary
    ex2.scatter(X_test[:,0], X_test[:,1], c=y_test, cmap=cmap)
    ex2.plot(vx, vy, color='black')
    ex2.set_title("Test")
    plt.tight_layout()
    plt.show()

Explanation of the Decision Boundary:

The decision boundary is obtained from the equation:

w₁·x₁ + w₂·x₂ + b = 0

Solving for x₂:

x₂ = -(w₁·x₁ + b) / w₂

Where:

- w₁, w₂ are the model coefficients

- b is the intercept

- The resulting line separates the two classes
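As a worked example of the formula above, the sketch below plugs in the coefficients printed in the Results section and rewrites the boundary as x₂ = slope·x₁ + offset. The names `slope`, `offset`, `boundary_x2`, and `decision_value` are illustrative choices, not part of the original code.

```python
# Coefficients and intercept as printed in the Results section
w1, w2 = -1.72827643, -1.35557446
b = -2.79680024

# x2 = -(w1*x1 + b) / w2, rewritten as x2 = slope*x1 + offset
slope = -w1 / w2
offset = -b / w2

def boundary_x2(x1):
    """x2 coordinate of the decision boundary at a given x1."""
    return slope * x1 + offset

def decision_value(x1, x2):
    """Value of w1*x1 + w2*x2 + b; zero on the boundary itself."""
    return w1 * x1 + w2 * x2 + b

print(f"slope = {slope:.4f}, offset = {offset:.4f}")
# A point taken from the line should give a decision value of about zero
print(decision_value(-3.0, boundary_x2(-3.0)))
```

The slope comes out negative, so the line runs from the upper left of the plot to the lower right.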

The decision boundary is a straight line that divides the feature space into two regions. Because both coefficients are negative, the decision value is positive for small (negative) values of x₁ and x₂:

- Lower-left region: class 1 is predicted (red-orange)

- Upper-right region: class 0 is predicted (royal blue)
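Which side of the line receives which class can be checked directly from the sign of the decision value. The sketch below evaluates it for four rows of the dataset (rounded to three decimals); the helper name `predict` is an illustrative choice. Since both coefficients are negative, the value grows as x₁ and x₂ decrease.

```python
# Coefficients and intercept as printed in the Results section
w1, w2, b = -1.72827643, -1.35557446, -2.79680024

def predict(x1, x2):
    """Class 1 when the decision value w1*x1 + w2*x2 + b is positive."""
    return 1 if w1 * x1 + w2 * x2 + b > 0 else 0

# (x1, x2, true class): rows from the dataset, rounded to three decimals
samples = [
    (-3.153, -2.441, 1),
    (-1.471, -1.830, 1),
    (0.202, -0.539, 0),
    (-2.108, 1.258, 0),
]

for x1, x2, label in samples:
    print((x1, x2), "predicted:", predict(x1, x2), "true:", label)
```

The two class 1 points lie toward the lower left of the plot and the two class 0 points toward the upper right, and each is predicted as labeled.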

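The perfect accuracy (100%) on the test set indicates that:

- The classes are linearly separable, at least for the held-out points

- The model has found a boundary that separates them (with well-separated classes, many such lines exist)

- There is no sign of overfitting on this split (the model generalizes well to the test set)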

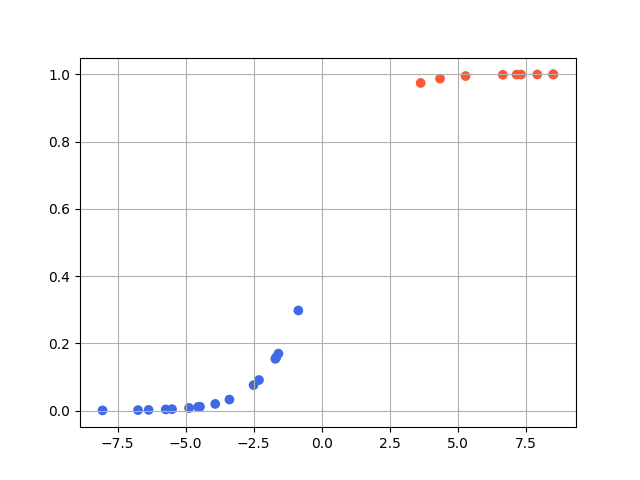

4. Analysis of the Sigmoid Function

This code shows how the sigmoid function transforms the decision values into probabilities:

    import numpy as np
    import matplotlib.pyplot as plt
    from sklearn.model_selection import train_test_split
    from matplotlib.colors import ListedColormap

    cmap = ListedColormap(['royalblue','#FF5733'])

    # Read data
    file = np.loadtxt("blobs.csv", delimiter=',')
    X = file[:,0:2]
    y = file[:,2]

    X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=1)

    # Coefficients and intercept of the trained model
    va = np.array([[-1.72827643, -1.35557446]])
    s = -2.79680024

    def decision(x):
        # Decision value w·x + b and the class it implies
        v = x @ va.T + s
        if v > 0.0:
            return 1, v
        else:
            return 0, v

    n, m = X_test.shape
    vx = np.zeros(n)  # decision values
    vy = np.zeros(n)  # sigmoid probabilities

    for i in range(n):
        v, v1 = decision(X_test[i])
        vx[i] = v1[0]
        vy[i] = 1/(1+np.exp(-v1[0]))

    fig, ex = plt.subplots()
    ex.scatter(vx, vy, c=y_test, cmap=cmap)
    ex.grid()
    plt.show()

Explanation:

- decision(): Computes the value of the decision function w·x + b

- 1/(1+np.exp(-v)): Sigmoid function that converts the decision value into a probability

- The plot shows how points of different classes map to different probability ranges
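The same two steps can also be written without an explicit loop. The following is a minimal vectorized sketch, assuming the coefficients reported in the Results section and using four rows of the dataset (rounded to three decimals) in place of the test set; `sigmoid` and `X_sample` are illustrative names, not part of the original code.

```python
import numpy as np

# Coefficients and intercept reported for the trained model
w = np.array([-1.72827643, -1.35557446])
b = -2.79680024

def sigmoid(z):
    """Logistic function 1 / (1 + exp(-z)), applied element-wise."""
    return 1.0 / (1.0 + np.exp(-z))

# A few rows from the dataset (rounded); columns are x1, x2
X_sample = np.array([
    [-3.153, -2.441],   # class 1
    [-4.038, -3.197],   # class 1
    [ 0.202, -0.539],   # class 0
    [-0.434,  2.525],   # class 0
])

z = X_sample @ w + b          # decision values, one per row
p = sigmoid(z)                # estimated probability of class 1
pred = (z > 0).astype(int)    # same rule as decision(): 1 if z > 0, else 0

for zi, pi, ci in zip(z, p, pred):
    print(f"z = {zi:+.3f}  p = {pi:.3f}  class = {ci}")
```

A decision value of exactly 0 maps to a probability of 0.5, so thresholding the decision value at 0 and thresholding the probability at 0.5 give the same class.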

This plot illustrates the transformation of the decision values into probabilities:

Interpretation:

- The blue points (class 0) have negative decision values and probabilities < 0.5

- The red points (class 1) have positive decision values and probabilities > 0.5

- The clear separation between the two groups confirms the perfect classification

Limitations

Although the model works perfectly for this data, it is important to note that:

- Logistic regression can only learn boundaries that are linear in the features it is given

- If the data were not linearly separable, the accuracy would be lower, since no straight line could classify every point correctly

- More complex problems would require non-linear models (kernel SVM, neural networks, etc.)
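The first limitation can be illustrated with a four-point XOR pattern. This is toy data, not part of this assignment, and the grid search below is only a rough sketch: it tries a coarse grid of linear boundaries and counts how many points each one classifies correctly, then repeats the count with an extra product feature x₁·x₂ and hand-picked weights (both assumptions made for illustration).

```python
from itertools import product

# XOR-style toy data: ((x1, x2), class). Not from the report's dataset.
points = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

def n_correct(weights, bias, features):
    """Count points classified correctly by the rule w . f(x) + bias > 0."""
    hits = 0
    for x, label in points:
        z = sum(w * f for w, f in zip(weights, features(x))) + bias
        hits += int((1 if z > 0 else 0) == label)
    return hits

def linear(x):
    # Original features only, so the boundary is a straight line
    return (x[0], x[1])

def with_product(x):
    # Adds the non-linear feature x1*x2 (an assumption for illustration)
    return (x[0], x[1], x[0] * x[1])

# Coarse grid of candidate parameters from -4 to 4 in steps of 0.5
grid = [k / 2 for k in range(-8, 9)]

best_linear = max(n_correct((w1, w2), b, linear)
                  for w1, w2, b in product(grid, repeat=3))
best_product = n_correct((1.0, 1.0, -2.0), -0.5, with_product)

print("best linear boundary:", best_linear, "of 4 correct")
print("with x1*x2 feature:  ", best_product, "of 4 correct")
```

No straight line gets all four XOR points right, while a boundary that is linear in the extended features does, which is the same idea that kernel methods and neural networks build on.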

Conclusions

Logistic regression is a fundamental and efficient classifier for binary problems, and in this case it reached 100% accuracy on the test set thanks to the clear linear separation of the data. Through the sigmoid function, the model turns the decision value into probabilities that quantify the confidence of each prediction, and the perfect test score shows no sign of overfitting on this split. In real scenarios with non-separable data, however, it would be necessary to rely on regularization and on more thorough evaluation metrics.